The Community for Observing AI Agents

Compare AI agent observability tools on what actually matters: pricing, tracing, PII handling, and framework support.

Live Rankings

Ranked by votes from engineers who’ve actually shipped with these tools. Click a row to see the tool’s full profile and the reasoning behind each vote.

What Is Agent Observability?

See what your AI is actually doing. Stop guessing why agents fail. Get end-to-end visibility into every reasoning step, tool call, and model decision, so you can debug faster and ship with confidence.

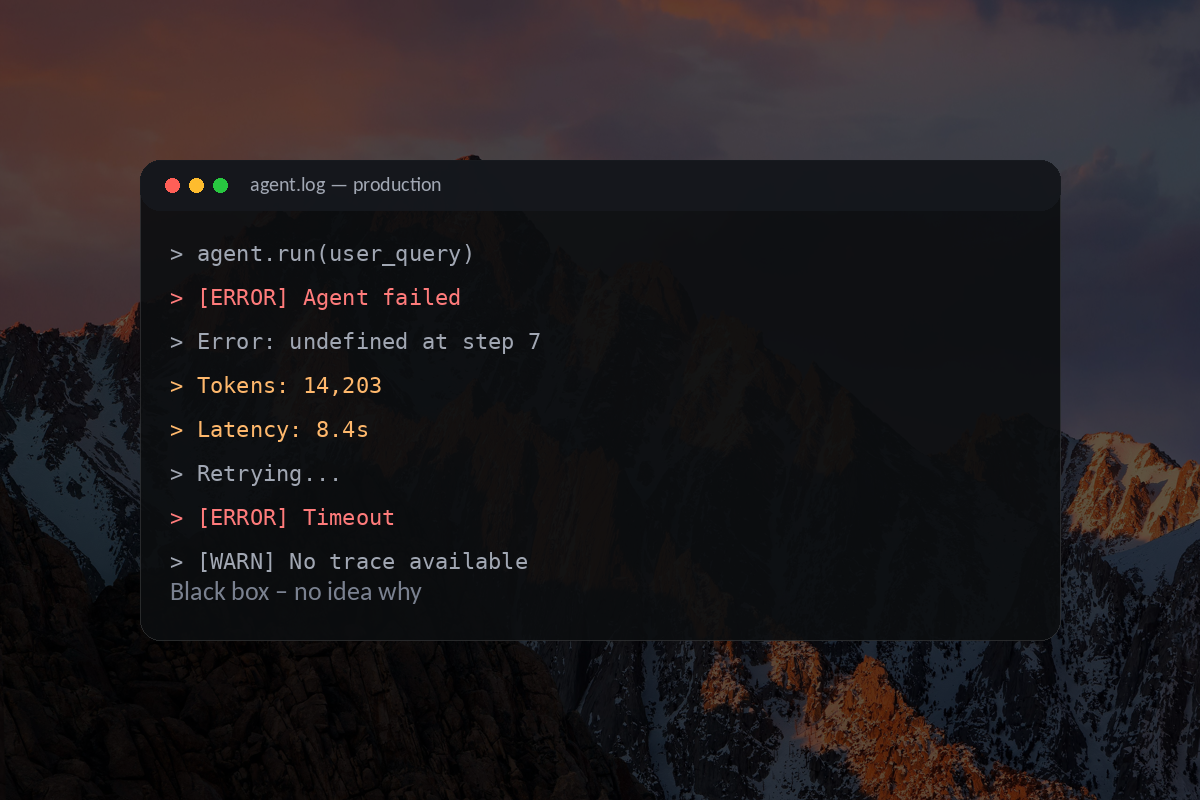

Black box

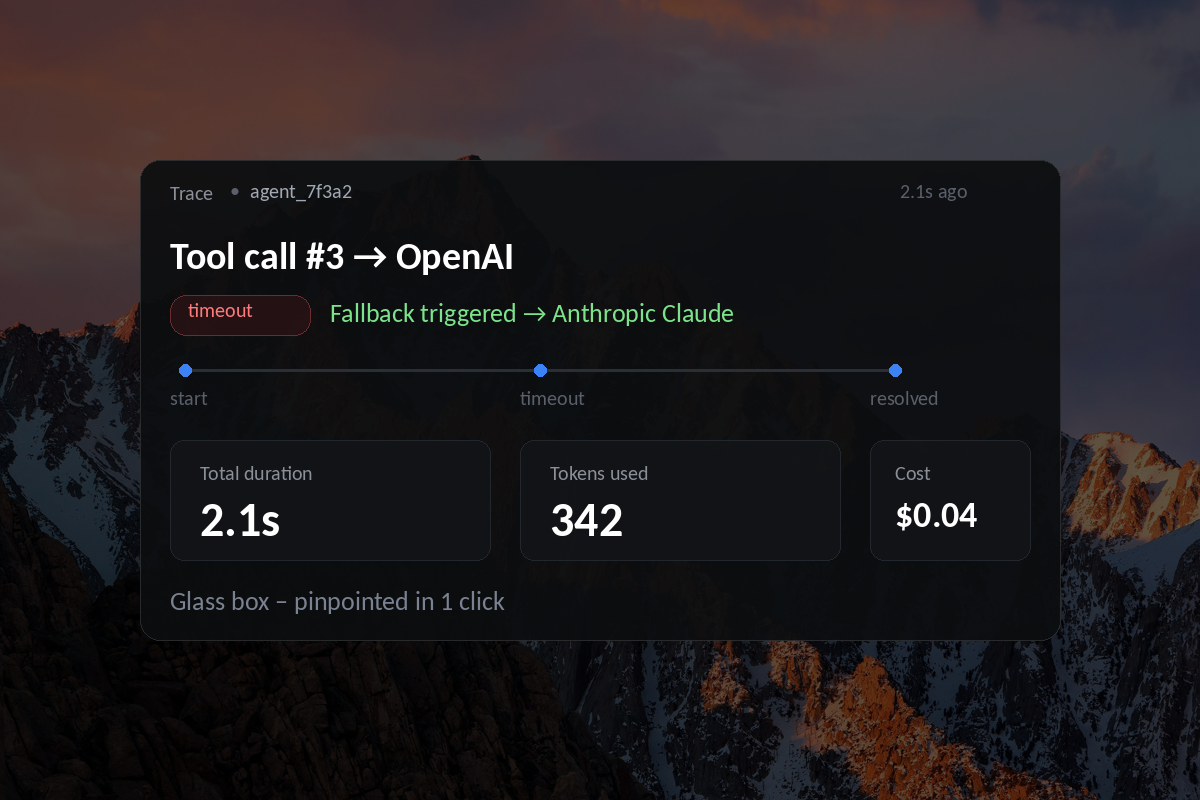

Black box Glass box

Glass boxWhy teams need to observe agents

Agents make decisions, call tools, and chain calls together. Without traces, you’re debugging stack traces written in English.

Prevent data leaks

Block PII at trace ingest with rules and field-level redaction.

Debug faster

Searchable traces for every tool call, retry, and timeout.

Build trust

Signed, immutable trace exports that serve as audit trails for compliance.

Optimize costs

Track tokens, latency, and runaway loops per trace.
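As a rough illustration of what tracking tokens, latency, and runaway loops per trace can mean in practice, here is a minimal Python sketch. It is not taken from any of the tools compared on this page: the `Span` shape, the `summarize_trace` helper, and the `loop_threshold` default are all hypothetical, and real tracing SDKs expose richer span data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Span:
    """Hypothetical, simplified span; real SDKs carry far more fields."""
    name: str          # e.g. "llm.call" or "tool.search"
    tokens: int        # tokens attributed to this step
    latency_ms: float  # wall-clock duration of this step


def summarize_trace(spans, loop_threshold=5):
    """Aggregate cost signals for one trace.

    Assumes spans ran sequentially, so latencies can simply be summed.
    A step name repeated `loop_threshold` times or more is flagged as a
    possible runaway loop (a heuristic, not a proof of one).
    """
    calls = Counter(span.name for span in spans)
    return {
        "total_tokens": sum(span.tokens for span in spans),
        "total_latency_ms": sum(span.latency_ms for span in spans),
        "suspected_loops": sorted(
            name for name, count in calls.items() if count >= loop_threshold
        ),
    }


# Example: an agent that called the same tool six times in one trace.
spans = [Span("tool.search", 100, 50.0) for _ in range(6)]
spans.append(Span("llm.call", 400, 900.0))
summary = summarize_trace(spans)
print(summary)
```

The repeat-count heuristic is deliberately crude. In practice a team would likely tune the threshold per tool, or compare call arguments to tell a legitimate retry from a loop.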

Langfuse vs Arize vs LangSmith

Same prompts, same agent, three observability stacks. The full comparison matrix grows as engineers vote.

| Dimension | Langfuse | LangSmith | Arize Phoenix (OSS + AX) |
|---|---|---|---|
| Pricing | Free → $29 → $199 → Ent; usage-based; unlimited users; self-host | Free (5k) → $39/seat + usage; gets pricey w/ team | OSS free (no limits); AX ~$50/mo+ usage |
| Tracing | Deep, hierarchical, OTEL-native | Deep, best for LangGraph | Deep, OTEL / OpenInference |
| PII | Rules-based | Rules-based | Rules-based / masking |
| Frameworks | Broad, agnostic (TS/Py, many SDKs) | Best for LangChain / LangGraph | OTEL-first, very broad |
| Strength | Flexible, self-host, cost + evals | Best agent-graph debugging | Eval, drift, RAG monitoring |
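For readers wondering what "rules-based" PII handling with field-level redaction looks like, here is a minimal Python sketch. It does not reproduce the API of Langfuse, LangSmith, or Arize Phoenix: the `redact` function, the `RULES` list, and the `SENSITIVE_FIELDS` set are hypothetical names, and the two regexes are simplified examples rather than production-grade detectors.

```python
import re

# Hypothetical pattern rules: (compiled regex, replacement placeholder).
RULES = [
    (re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "[EMAIL]"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
]

# Hypothetical field-level rule: these keys are always masked entirely.
SENSITIVE_FIELDS = {"password", "api_key"}


def redact(value, key=None):
    """Return a redacted copy of a trace payload before it is stored."""
    if key in SENSITIVE_FIELDS:
        return "[REDACTED]"
    if isinstance(value, dict):
        return {k: redact(v, k) for k, v in value.items()}
    if isinstance(value, list):
        return [redact(item) for item in value]
    if isinstance(value, str):
        for pattern, placeholder in RULES:
            value = pattern.sub(placeholder, value)
    return value


payload = {
    "input": "mail bob@example.com",
    "api_key": "sk-123",
    "steps": [{"note": "SSN 123-45-6789"}],
}
clean = redact(payload)
print(clean)
```

Running redaction at ingest, before the trace is written, means the raw values never reach storage. The trade-off is that pattern rules only catch what they were written to match, which is why the table lists masking as a separate capability.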

Not listed yet?

Get your product in front of engineers actively comparing observability stacks.

Latest from the blog

Incident-by-incident: what broke in production, what observability surfaced (or missed), and how the team fixed it.

Resources we recommend

Hand-picked posts, videos, and threads on AI agent observability — vendor-neutral, practitioner-led.

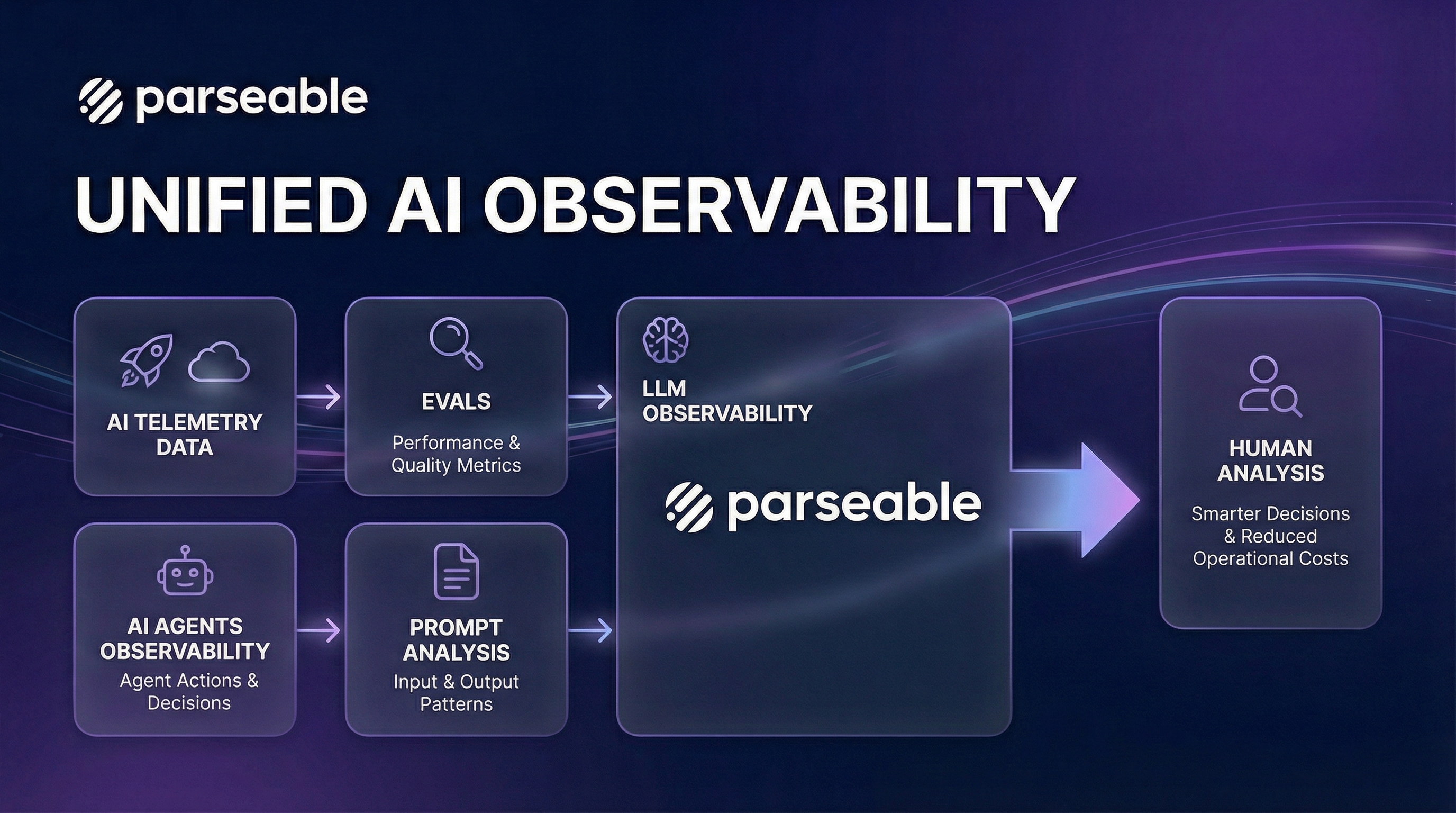

The Three Pillars of Agent Observability: Evals, LLM Monitoring & Prompt Analysis

A comprehensive guide to understanding agent observability across the AI lifecycle — how evals, LLM monitoring, and prompt analysis converge in pre- and post-production.

What is OpenTelemetry? [Everything You Need to Know]

A clear primer on OTel — the Collector, instrumentation, and why everyone in agent observability is converging on it. Good if you're new to OpenTelemetry semantics.

AI Agent Observability Tools: A Practitioner's Map (2026)

Our curated, vendor-neutral map of 35+ agent observability platforms, AMPs, evaluation tools, and SDKs that real teams use today.